Gustave Doré Over London — By Rail 1872 (woodblock)

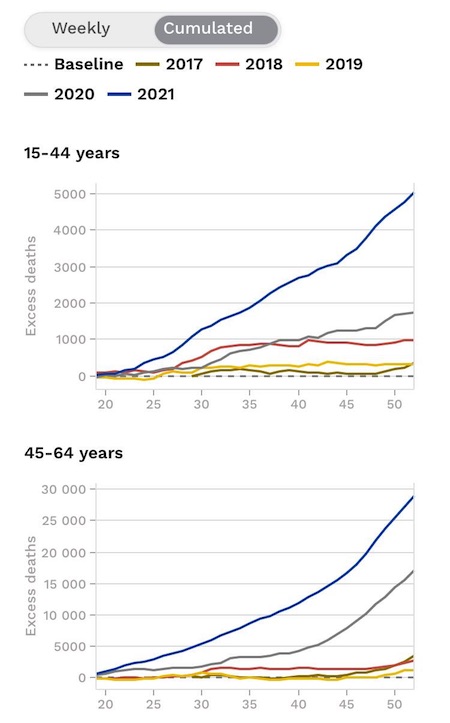

Baby myocarditis

https://twitter.com/i/status/1678475139841662996

Luft

https://twitter.com/i/status/1678528304616226816

Senator Ron Johnson says Gal Luft, who was just indicted by the DOJ, should be granted immunity to testify before Congress about the criminal behavior he witnessed by the Biden family.

Who agrees? pic.twitter.com/yXHKVoWW07

— Benny Johnson (@bennyjohnson) July 10, 2023

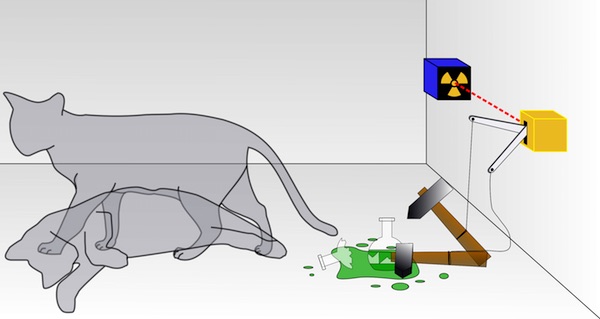

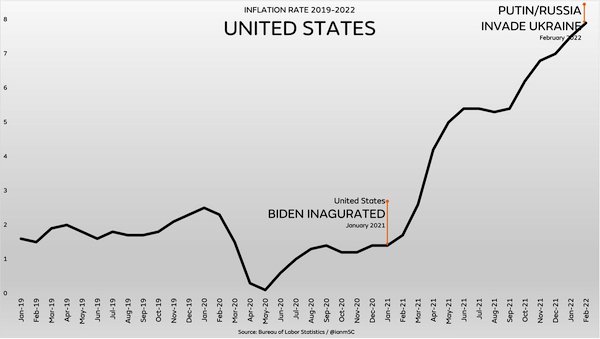

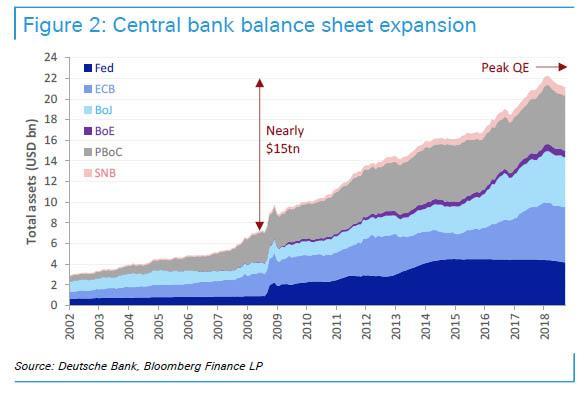

BRICS

BRICS+ is a collection of nations that say enough is enough with endless US wars, financial instability and US Govt money printing on the backs of other nations causing inflation around the world. BRICS+ will end the US Govt Ponzi scheme without a war. pic.twitter.com/TKVkpk2Cdi

— Kim Dotcom (@KimDotcom) July 10, 2023

“..mutual assistance, deep integration, innovation and pioneering, as well as mutual benefit.”

• China Ready To Contribute To Build Stable World Jointly With Russia – Xi (TASS)

Beijing is ready to join efforts with Moscow to contribute to building a prosperous, stable and just world order, Chinese leader Xi Jinping said on Monday. “China is ready to continue working with Russia <…> in order to contribute to developing and reviving both countries as well as to build a prosperous, stable and just world order,” China’s CCTV quoted Xi as saying at a meeting with Russian Federation Council Speaker Valentina Matviyenko in Beijing. Xi said that, in the new era, his country was ready to continue developing the comprehensive partnership and strategic cooperation with Russia which imply “mutual assistance, deep integration, innovation and pioneering, as well as mutual benefit.”

“That’s one she’s going to want to take back at some point, I’m certain.”

• 41+ Countries Returning To Gold Standard (QTR)

Remember back when the Russia/Ukraine war had just started, and I predicted that Russia and China would launch their own gold-backed currency? At the time, this idea sounded completely foreign, and I was ridiculed for bringing it up. Today, it has just become reality. 41+ countries look like they could be returning to a gold standard. The images plastered all over RT this weekend had headlines like “New Money, New World” and “Gold Standard Will Be Of Great Benefit To Strengthening New Single Currency”. “The official announcement is expected to be made during the BRICS summit in August in South Africa,” Kitco reported over the weekend. “At first glance, a new transaction unit, backed by gold, sounds like good money – and it could be, first and foremost, a major challenge to the US dollar’s hegemony,” Thorsten Polleit, chief economist at Degussa, said.

He continued: “For making the new currency as good as gold, a truly sound currency, it must be convertible into gold on demand. I am not sure whether this is what Brazil, Russia, India, China and South Africa have in mind. Using gold as money, the unit of account would be a true game changer, no doubt about it. It could lead to a sharp devaluation of many fiat currencies vis-à-vis the yellow metal (including the BRICS fiat currencies), and it could catapult up goods prices in terms of fiat currencies. It could be a shock to the global fiat money system. I am not sure that this is what the BRICS wish to achieve.” The official announcement of the new currency is expected in August during the BRICS summit in South Africa.

Even more shocking than the announcement is the cavalier attitude that United States Treasury Secretary Janet Yellen appears to be taking to the news. In statements I can only describe as completely delusional, Yellen said this weekend: “I just want to reiterate what I’ve said in the past, which is I think the United States can rest assured that the dollar is going to play the dominant role in facilitating international transactions and serving as a reserve currency in the years ahead. I don’t see that role being threatened by any development including the one that you’ve mentioned [BRICS common currency].” That’s one she’s going to want to take back at some point, I’m certain.

“’If it’s my gold then I want it in my country’”

• Anti-Russian Sanctions Spooking Countries Into Repatriating Gold Reserves (RT)

An increasing number of countries are bringing home their bullion reserves in the wake of unprecedented sanctions imposed by the West on Russia, Reuters reported on Monday, citing an Invesco survey of central bank and sovereign wealth funds. According to the report, widespread losses for sovereign money managers resulting from last year’s financial market rout made them “fundamentally” rethink their strategies amid fears of higher inflation and further geopolitical tensions. The survey showed that over 85% of the participating 85 sovereign wealth funds and 57 central banks believe that inflation will now be higher in the coming decade than in the last.

A “substantial share” of central banks were reportedly concerned by the precedent set by sanctions on Russia. Almost 60% of respondents said it had made gold more attractive, while 68% were keeping reserves at home, compared with 50% in 2020. “’If it’s my gold then I want it in my country’ (has) been the mantra we have seen in the last year or so,” according to Invesco’s head of official institutions, Rod Ringrow, who oversaw the report. Confirming Ringrow’s assessment, one central bank told Reuters anonymously: “We did have it (gold) held in London… but now we’ve transferred it back to [our] own country to hold as a safe haven asset and to keep it safe.”

Nearly half of Russia’s $640 billion worth of gold and forex reserves were frozen by the West last year as part of numerous rounds of sanctions over the conflict in Ukraine. Moscow has condemned the freezing of its assets, describing the West’s plans to expropriate those funds as theft and warning of retaliatory measures. The Invesco study showed that geopolitical concerns, combined with opportunities in emerging markets, have also triggered a shift among some central banks away from the US dollar. Of those surveyed, 7% reportedly believe that mounting US debt is also negative for the greenback, but most still see no alternative to the dollar as the world’s reserve currency.

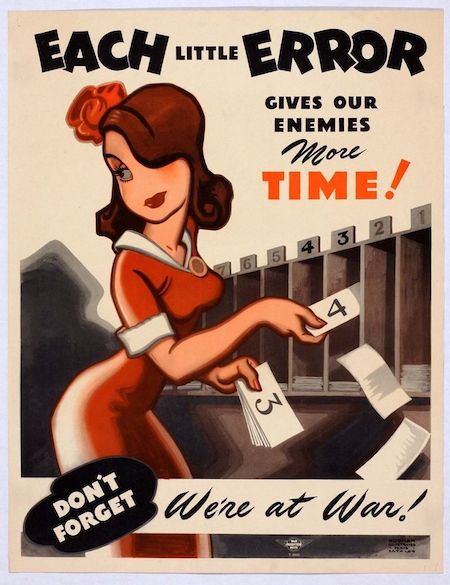

The military industrial complex is making a killing.

• US, Europeans Depleted Their Weapons Stockpile Fighting Russia in Ukraine (Sp.)

The US and Europe may have military budgets that far outstrip those of their global rivals, but there are multiple factors helping to explain their depletion and inability to expand production, retired Major General Vallely, the chairman of the Stand Up America Foundation, a US advocacy and non-profit, told Sputnik. “As you know, when the Americans surrendered in Afghanistan, we left $85 billion-worth of equipment, ammunition, guns, tanks, helicopters, artillery. $85 billion is a lot. That would arm an entire country for years,” Vallely explained. Secondly, the US defense sector “sells a lot of equipment to other countries – some 50 different countries,” which means not all its resources can be concentrated on one task, like flooding arms into Ukraine.

“And the third reason is the amount that we have sent into Ukraine, including what we called ‘prepositioned’ equipment in Europe. Those resources are depleted. So the American defense industry can’t keep up with demand now. We don’t have the manufacturing capability to keep up with what’s being used and what is needed in the future. The same goes for the European countries,” the senior officer said, pointing out that many of the weapons and much of the equipment given to Kiev over the past year and a half have been destroyed. Vallely also pointed to political, economic and social factors to account for the shortfall, saying many NATO countries, particularly in Europe, “are sort of reluctant” to keep pumping their military equipment into Ukraine with no end in sight.

Instead, they’ve actually “slowed down their production, I think, and also the amount of supplies that they’re giving Ukraine, because they want to keep a lot of that, at least some for themselves. So those are all reasons why we see this shortage and it’s going to continue,” he said. Major European NATO powers such as Germany, Italy and the UK have spent months warning that the stocks of weapons they have left after splurging on Ukraine would be enough to withstand just “48-72 hours” of combat in the event of a surprise invasion or other major conflagration. In the UK, defense officials complained privately back in January that the military had been “hollowed out” by arms shipments to Kiev, and London’s reluctance to up defense expenditures.

In spite of talk among NATO members about record defense budgets, Vallely doesn’t expect the alliance to be able to substantially ramp up production capabilities over the coming months and years. “I don’t think they want to. It costs the countries a lot of money to build this equipment. And you have an ailing economy in Europe and the United States. They can print all the money they want to, but still you have to build these things and it takes a lot of time,” he said. On top of that are resource constraints, including rare-earth minerals, for which the US has come to rely on China and whose supply Beijing has recently indicated it would trim amid the ongoing trade war with Washington, Vallely said.

NATO must deliver this week. But all they have is words. Bonus point for the headline.

• Vaudevillian Visitors in Vilnius (Sp.)

The crisis in Ukraine is in many respects the consequence of the Western alliance’s decade-and-a-half long quest to pull Kiev into the bloc, despite repeated warnings by Russia that Ukrainian NATO membership was a “red line” for Moscow. The alliance first welcomed Ukraine’s “Euro-Atlantic aspirations for membership” at its 2008 summit in Bucharest, Romania. Two years later, the pro-Western government in Kiev was replaced in democratic elections by the government of Viktor Yanukovych, who pledged Kiev’s bloc neutrality. Four years after that, in 2014, Yanukovych was overthrown in a US-backed coup d’etat, with the crisis prompting Crimea to break off from Ukraine and rejoin Russia, and sparking a bloody conflict in Donbass. Moscow attempted to mediate the crisis over eight years through the Minsk peace accords, a comprehensive, 12-point peace deal calling for broad autonomy for Donbass as part of Ukraine.

Successive governments in Kiev dragged their feet on the Minsk deal’s implementation. In the meantime, in 2019, Ukraine’s parliament amended the country’s constitution to cement Kiev’s aspirations to join NATO and the European Union. In December 2021, as war clouds gathered over Donbass, Moscow attempted one final push to prevent the escalation of the Ukraine crisis into a Russia-NATO proxy conflict, proposing comprehensive security agreements to the US and the alliance to reduce tensions, including a request for a commitment from the Western alliance not to allow Ukraine to join the bloc. The alliance refused, saying it would never reject its “open door” policy. Several weeks later, amid Russian intelligence reports that Kiev would try to resolve the Donbass crisis by force, Moscow kicked off its special military operation.

“NATO member states cannot use security guarantees as an alternative to Ukraine’s membership in the bloc.”

• Zelensky Can Expect Military Aid Guarantees From NATO Summit at Best (Sp.)

The most Ukrainian President Volodymyr Zelensky can expect from the upcoming NATO summit in Vilnius on Ukraine’s admission to the alliance is guarantees of further military aid, Konstantin Gavrilov, the head of the Russian delegation at the military security and arms control talks in Vienna, said in an interview with Sputnik. Zelensky continues to insist that he needs Western weapons and military equipment under the pretext of a confrontation with Russia, the diplomat said, adding that this is why Zelensky has long hinted that he would like an accelerated process of joining NATO. The UK and the US, however, have made it clear that the necessary criteria must be met for this.

“The best that Zelensky can expect [from the summit] is guarantees of further military assistance and supplies of ammunition,” Gavrilov said, adding that “the fact that a meeting of the NATO-Ukraine Council will be held during the NATO summit is just a nod to Kiev and nothing more.” On Monday Ukrainian Foreign Minister Dmytro Kuleba said that “NATO member states cannot use security guarantees as an alternative to Ukraine’s membership in the bloc.” The Lithuanian capital city of Vilnius will host the NATO summit from July 11-12. NATO Secretary General Jens Stoltenberg will chair the meeting. Discussions on Ukraine’s NATO prospects, strengthening the alliance’s eastern flank and defense spending are expected to top the summit’s agenda. On June 19, Stoltenberg said that the summit would not discuss a formal invitation, but rather ways to “move Ukraine closer to NATO.”

War is peace.

• Ukraine In NATO Is ‘Path To Peace’ – FM (RT)

Inviting Kiev into NATO will create peace in Europe, Ukrainian Foreign Minister Dmitry Kuleba argued on Monday, during an interview with the German public broadcaster ARD. Kiev was “promised entry” in 2008, but Germany and other Western European countries got cold feet, Kuleba told the Tagesschau newscast on Monday. The upcoming Vilnius summit of the US-led military bloc is the time to correct that “mistake,” he added. “We appreciate everything that Germany and the US are doing for Ukraine to support us in this war,” Kuleba added, but disagreed with Berlin that the invitation into NATO would drag the West into open war with Russia. The invitation is simply “a political message to Ukraine” and Article 5 – the mutual defense clause – “only applies if Ukraine is a member country.”

“Ukraine’s NATO membership is a path towards peace. Once Ukraine can join NATO, there will be no more wars in Europe,” Kuleba claimed, because “Russia will no longer dare to attack the alliance.” Although Kiev’s bid is strongly supported by the Eastern European and Baltic states, the leaders of Western Europe and the US have opposed making Ukraine a full-fledged NATO member. In a CNN interview over the weekend, US President Joe Biden reiterated that Washington is against admitting Ukraine into NATO and offered “Israel-like guarantees” instead. “Why does everyone always want to put Ukraine in a separate pot?” lamented Kuleba. “Why are people always looking for alternative solutions? Why can’t we simply develop a holistic solution for a European security architecture in which Ukraine sits in the NATO boat?”

He went on to insist that Ukraine is “a win” and not a burden, because “without our army, the eastern flank [of NATO] could not be defended at all.” Kiev will not accept guarantees as a replacement for membership, Kuleba added, but only as an interim measure. The US and its allies have spent over $100 billion just in 2022 to provide Ukraine with weapons, equipment, ammunition and even cash, while insisting they were not a party to the conflict with Russia. Ukrainian Defense Minister Alexey Reznikov has argued on multiple occasions that his country was already “de facto” a member of the Western alliance and “carrying out NATO’s mission” with the blood of Ukrainian soldiers.

No NATO membership for Ukraine was one of the key demands in Russia’s collective security proposal sent to Washington and Brussels in December 2021. Biden also revealed that Russian President Vladimir Putin had presented that condition at their June 2021 summit in Switzerland – but that he refused, citing NATO’s open-door policy. Moscow considers NATO’s eastward expansion a threat to Russia’s national security and has cited Kiev’s ties to the bloc as one of the root causes of the current armed conflict with Ukraine. Russian officials have argued that Ukraine’s neutrality would be one of the necessary prerequisites for a lasting peace between the two countries.

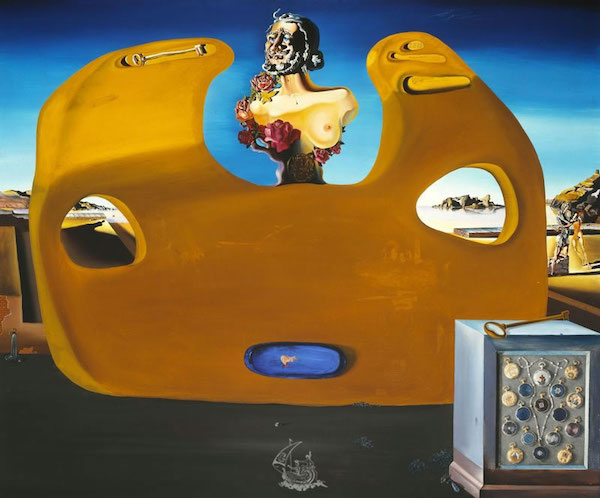

Yup, more Orwell.

• US State Dept Has Become ‘Ministry Of Truth’ – Moscow (RT)

US authorities are urging the country’s media outlets to propagate falsehoods about Russia in a bid to undermine its stability amid the ongoing stand-off between Moscow and the West, the Foreign Intelligence Service (SVR) has claimed. In a statement on Monday, the agency quoted its director, Sergey Naryshkin, as saying that “the US State Department … basically dictates to the American media what exactly they should write and say,” adding that it “has finally turned into the ‘Ministry of Truth’.” The comment was an apparent reference to the fictional ministry tasked with falsifying historical events from George Orwell’s world-renowned dystopian novel ‘Nineteen Eighty-Four’.

The SVR, citing intelligence data, claimed that last month the department sent instructions to several major media holdings, including AT&T, Comcast Corporation, Graham Media Group, Nash Holdings, Newsweek Publishing and The New York Times Company, telling them to “reflect events in and around Russia in a distorted manner.” According to the agency, these outlets were tasked with convincing Russian citizens that there was a need for a “forceful struggle against the authorities, up to an armed rebellion.” They also wanted to “involve the population in protest actions” by “actively circulating falsehoods” about Russia’s purported weakness and its “inevitable defeat in the stand-off with the West,” the statement read.

To achieve this goal, Washington has told media organizations to focus on packaging certain narratives to young Russians, as well as hailing Russian opposition figures and other nationals engaged “in sabotage and terrorist actions” against Moscow as heroes, the agency claimed. “There is nothing new in freedom of speech being trampled on in the West. It is unfortunate that the State Department, which used to be a sober-minded and rational agency … has turned into a stinking landfill of informational garbage,” the SVR added. The statement comes after Kremlin Press Secretary Dmitry Peskov predicted in April that the level of “external interference” into Russia’s domestic affairs would only grow amid the Ukraine conflict. He also suggested that the West would be interested in derailing Russia’s presidential elections, which are scheduled for March 2024.

The EU will never accept a Muslim nation as a member.

• Erdogan Ties Sweden’s NATO Bid To Türkiye’s EU Membership (RT)

Ankara will sign off on Sweden’s accession to NATO if the EU “opens the way” to membership for Türkiye, Turkish President Recep Tayyip Erdogan said on Monday. Erdogan has reportedly asked US President Joe Biden to help pressure Brussels into accepting Türkiye into the European bloc. “Türkiye has been waiting at the door of the European Union for over 50 years now, and almost all of the NATO member countries are now members of the European Union,” Erdogan told reporters before flying to NATO’s summit in Lithuania. “Come and open the way for Türkiye’s membership in the European Union. When you pave the way for Türkiye, we’ll pave the way for Sweden as we did for Finland,” he added.

Sweden, Finland, and Türkiye agreed last summer that Ankara would approve the two Nordic nations’ applications for NATO membership if both granted a number of key concessions to Ankara. Sweden and Finland promised that they would lift arms embargoes on Türkiye, extradite alleged Kurdish and Gulenist terrorists, and crack down on the activities of the Kurdistan Workers’ Party (PKK) within their borders. Türkiye’s parliament signed off on Finland’s application in March, but Erdogan maintains that Sweden hasn’t fulfilled its end of the bargain. In a phone call with Biden on Sunday, the Turkish president claimed that Sweden still allows “terrorist organizations” – referring to the PKK – to demonstrate on its streets, according to a readout of the call from Ankara.

Erdogan also told Biden that Türkiye expects a “clear and strong message of support” from NATO leaders for its EU membership at this week’s summit in Lithuania. A readout of the call from the White House made no mention of this request. Erdogan is set to hold talks with Biden and Swedish Prime Minister Ulf Kristersson while in Lithuania. Asked about Erdogan’s comments, NATO Secretary-General Jens Stoltenberg told the Associated Press that Türkiye’s accession to the EU was not a part of the deal that Sweden, Finland, and Türkiye signed last year.

Stoltenberg insisted that Sweden has fulfilled its obligations, and that it is “still possible to have a positive decision” on its accession to the military bloc during this week’s summit. Türkiye applied for EU membership in 1987 and was recognized as a candidate in 1999. Membership negotiations opened in 2005, but progress was slow, and no talks have taken place since 2016. Brussels has since condemned Erdogan over alleged human rights abuses, and the Foreign Affairs Committee of the European Parliament warned in a 2017 report that constitutional reforms strengthening his powers could run afoul of EU law and threaten Ankara’s membership bid.

“Putin listened to the explanations of the commanders and offered them further employment options..”

• Putin Held Meeting With Prigozhin, Wagner Commanders June 29 – Kremlin (Sp.)

Russian President Vladimir Putin held a meeting in the Kremlin with Yevgeny Prigozhin, the head of the Wagner PMC and the group’s commanders to discuss the events of June 24, Kremlin spokesman Dmitry Peskov said on Monday. “Indeed, the president had such a meeting. He invited 35 people to it. All group commanders and company management, including Prigozhin. This meeting took place in the Kremlin on June 29 and lasted almost three hours,” Peskov told a briefing. The details of the meeting are confidential, but both Putin and Wagner commanders gave an assessment of the June 24 events, the spokesman said.

“The only thing we can say is that the president gave an assessment of the company’s actions at the front line during the special military operation, and also gave his assessment of the events of June 24. Putin listened to the explanations of the commanders and offered them further employment options,” Peskov said. Commanders of Wagner told Putin that they are his staunch supporters and are ready to continue fighting for Russia, the spokesman concluded. On June 23, forces of the Wagner Group (PMC) seized the headquarters of Russia’s Southern Military District in the city of Rostov-on-Don, following accusations leveled against the Russian Ministry of Defense for allegedly striking the group’s camps. Both the Russian military and the Federal Security Service have denied the allegations.

The next day, Belarusian President Alexander Lukashenko revealed that he had spent the entire day negotiating with Yevgeny Prigozhin, as agreed upon with Russian President Vladimir Putin. As a result of the talks, the Wagner group leader accepted Lukashenko’s proposal to stop the movement of his troops in Russia and take measures to de-escalate the situation. Putin guaranteed that the Wagner group fighters would have the opportunity to sign contracts with the Ministry of Defense of the Russian Federation, return home, or move to Belarus.

“..Western governments and pundits clearly suffered more embarrassment from this episode than Putin did..”

MacGregor disagreed that the former Wagner chief was in cahoots with Russia’s enemies. He said: “I see no evidence that Mr. Prigozhin was made an agent by MI6 or the CIA or anybody else. Anybody who knows the Russians knows that any senior officer or commander or leader is surrounded by numerous FSB informants. The idea that he could have sold out even if he wanted to seems ludicrous.” Ritter pointed out in his CN piece that the Russian government is investigating the matter. If Prigozhin was indeed working for Western or Ukrainian intelligence, they clearly did not get what they paid for. Lessons: For Russia: Don’t repeat the mistake of hiring a private army. Several analysts pointed to a 500-year-old lesson from Niccolo Machiavelli that Russia ignored:

“Mercenaries and auxiliaries are useless and dangerous; and if one holds his state based on these arms, he will stand neither firm nor safe; for they are disunited, ambitious and without discipline, unfaithful. … I wish to demonstrate further the infelicity of these arms [i.e., mercenaries]. The mercenary captains are either capable men or they are not; if they are, you cannot trust them, because they always aspire to their own greatness, either by oppressing you, who are their master, or others contrary to your intentions; but if the captain [i.e., the leader of the mercenaries] is not skillful, you are ruined in the usual way [i.e., you will lose the war].”

MacGregor disputed the whole idea. He told Galloway: “I reject the notion that these people are mercenaries. I would compare them to the French Foreign Legion. The French Foreign Legion consists of large numbers of non-Frenchmen in many cases, but they have sworn allegiance to the French state and the French nation, and no one has fought harder and more loyally for France than the French Foreign Legion. I would say you have something very similar in the Wagner group. These are still Russians overwhelmingly, but there are numbers of Serbs or some Germans or others in the group, and they too have sworn allegiance to the Russian state. And as far as we can tell, none of them thought that they were marching on Moscow to remove Putin.

On the contrary, they saw themselves as going to Moscow to rescue Putin from what were widely considered bad advisors, bad counselors who have held up the Russian offensive and caused this war to drag out beyond the point of reason.” Whether they were mercenaries or not, the Kremlin and the MOD tried to get away with a dodgy legal maneuver and it caused them international embarrassment and nearly a bloody civil conflict. Lessons: For the West: Wait until an operation is over before popping the corks. Cries about a Russian civil war being under way, such as tweets from former U.S. Ambassador to Russia Michael McFaul, which blared that “The fight is now on. This is now a civil war,” blew up in their faces when Prigozhin turned tail.

The bigger lesson would be not to meddle in other nations’ internal affairs, but that would be too much to ask. The entire Russian nation had rallied around Putin, leaving him in a much stronger position and undercutting the persistent narrative that Russia is now a dangerously unstable nation. Western governments and pundits clearly suffered more embarrassment from this episode than Putin did. But ideologues rarely learn any lessons.

A soft warning. China speaks in whispers.

• US Cluster Munitions to Ukraine Can Lead to Humanitarian Problems – China (Sp.)

Last week, the US unveiled a new military assistance package for Ukraine that includes cluster munitions. The weapons are banned by the Convention on Cluster Munitions, which has been ratified by 123 countries, excluding the US and Ukraine. Supplies of cluster munitions by the United States to Ukraine may lead to humanitarian problems, Chinese Foreign Ministry spokeswoman Mao Ning said on Monday. “Many countries have spoken out against it [cluster munition supplies], irresponsible transfers of cluster munitions can easily lead to humanitarian problems. Humanitarian issues and military security issues must be dealt with in a balanced manner, and the transfer of cluster munitions must be treated with restraint and caution,” Mao told reporters.

“..a recruitment drive is underway in Argentina, Brazil, Afghanistan, Iraq, and areas of Syria that are “under American control.”

• Ukraine Stepping Up Mercenary Recruitment Effort – Russian MOD (RT)

Ukrainian units formed from foreign mercenaries have suffered high battlefield casualties, forcing Kiev to change its approach to finding hired fighters, the Russian Defense Ministry claimed on Monday. In a statement, the ministry alleged that the Ukrainian military command views foreign fighters as cannon fodder, sending them into the riskiest missions and placing them at the back of the line when it comes to the evacuation of injured troops. This has made recruiting fighters in European countries such as Poland much harder for Kiev, the assessment claimed. Ukraine has consequently ramped up efforts to find candidates in other parts of the world, according to the Russian Defense Ministry, which stated that a recruitment drive is underway in Argentina, Brazil, Afghanistan, Iraq, and areas of Syria that are “under American control.”

The reference to Syria likely concerns the predominantly Kurdish northeastern part of the country. The US supplied Kurdish militias with weapons, training and air support when they served as ground forces for the Washington-led coalition in the fight against Islamic State (IS, formerly ISIS). The Pentagon also maintains a military base in eastern Syria, despite the objections of the government in Damascus. The Russian Defense Ministry further claimed that Ukraine’s diplomatic missions in the US and Canada are actively hiring fighters with the help of the CIA and private military contractors under its influence.

Ukraine created the so-called International Legion for what it describes as foreign volunteers fighting for its cause. Moscow considers the fighters to be mercenaries and has repeatedly said that the regular laws of war do not apply to them. The Russian Defense Ministry’s assessment on Monday put the number of foreign mercenaries currently employed by Kiev at 2,029. There are numerous accounts from foreign fighters describing the perils of joining the Ukrainian ranks, including mismanagement by military leaders, corruption with logistics, and the overwhelming risks of engaging Russian forces.

I’m seeing Orwell everywhere today:

“..they were unwilling to allow the safety of others “to go to the winds so that people can speak freely.”

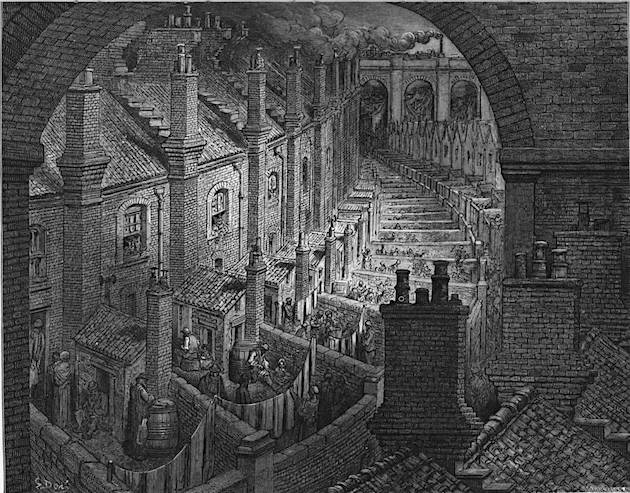

• Welcome to Zuckerberg’s Vision of Internet “Kindness” (Turley)

Threads’s rollout coincides with a court ruling that the government’s interventions to censor people on social media represented “the most massive attack against free speech in United States history.” Now, Facebook is offering an alternative to Twitter, with the assurance that users will be protected against any thoughts that Meta’s staff finds problematic. While free speech on Twitter is portrayed as harmful, the company has promised to “prioritize kindness.” That sounds eerily familiar to some of us as a way to deprioritize free speech. Recently, former Twitter executive Anika Collier Navaroli testified on how she and her staff would remove anything they considered “dog whistles” and “coded” messaging. Rather than using “kindness,” Twitter used undefined standards of “safety” to cancel free speech.

Navaroli declared that they were unwilling to allow the safety of others “to go to the winds so that people can speak freely.” Facebook has long tried to get the public to embrace its role as some kind of speech overlord. Years ago, Facebook rolled out an Orwellian commercial campaign to get the public to embrace censorship. The commercials showed young people heralding how they grew up on the internet and how the world was changing, creating a need for censorship under the guise of “content moderation.” Facebook, they promised, was offering the “blending of the real world and the internet world.”

Facebook is not alone in trying to get people to accept censorship. Recently, after the court ruling, various figures assured the public that they are better off letting corporate and government censors protect them from harmful thoughts. On CNN, Chief White House Correspondent Phil Mattingly went so far as to state that it simply “makes sense” for tech companies to go along with government censorship demands. After this week’s decision, the New York Times immediately issued a panicky tweet that the resulting outbreak of free speech could “curtail efforts to combat disinformation.” For his part, Zuckerberg prefers to just offer “kindness” and “sanity” with few details. Of course, there is a very simple way for Zuckerberg to show that he is committed to free speech: He can release the Facebook Files.

One of the reasons many of us in the free-speech community still support Musk is that he transformed the debate over government censorship by releasing the Twitter Files. For years, politicians and pundits dismissed objections from some of us to government-corporate coordination of censorship as unproven. In Congress, Democratic members attacked witnesses for supposedly lacking proof of censorship, even as they fought to block any investigation that might uncover that evidence. Musk changed all that by showing the public an extensive network of government interventions to support censorship and blacklisting of private citizens. Much of what we know today is derived from the Twitter Files, but surely there is more to learn.

“The poorest man may in his cottage bid defiance to all the forces of the crown. It may be frail – its roof may shake – the wind may blow through it – the storm may enter – the rain may enter – but the King of England cannot enter.”

• UK Parents Prosecuted for Refusing to Pay for Transgender Treatments? (Turley)

According to the UK’s Code for the Crown Prosecution Service (CPS), abusive conduct now includes “withholding money for transitioning [and] refusing to use their preferred name or pronoun.” So a parent with familial or religious objections to the transitioning of a child would be required under the law to fund operations or treatments. According to the guidance material, this is not even “an exhausted list,” but some of the first “examples” of potentially criminal conduct that come to mind. The guideline would suggest that parents with deep-seated religious convictions against transgender status would either have to fund an operation that they consider immoral or face arrest for failing to do so.

To potentially prosecute a parent for refusing to use their child’s adopted pronoun is chilling and wrong. Nevertheless, a CPS spokesperson doubled down with a comment to Fox News that “domestic abuse is a severe crime and leaves victims with a lasting impact . . . This assists prosecutors to ensure that any victim, regardless of who they are, can get justice for the abuse they have faced.” This follows the erosion of free speech and religious rights in Britain, including English courts upholding the criminalization of “toxic ideologies.” It was Sir Edward Coke, in The Institutes of the Laws of England (1628), who declared “For a man’s house is his castle, et domus sua cuique est tutissimum refugium [and each man’s home is his safest refuge].” William Pitt, the first Earl of Chatham, later added:

“The poorest man may in his cottage bid defiance to all the forces of the crown. It may be frail – its roof may shake – the wind may blow through it – the storm may enter – the rain may enter – but the King of England cannot enter.” That no longer appears to be the case when misusing pronouns or failing to write a check for a child’s transitioning, which will now be treated the same as physical child abuse. As the definition of abuse is broadened, the state derives greater control and direction over family affairs and relations. Moreover, leaving enforcement to the discretion of police in this “nonexhaustive” list only further undermines this long-standing protection over internal family matters. The question is what the limiting principle will be as the state defines a wider array of conduct to be child abuse. The default assumption of Pitt appears to have flipped in the United Kingdom.

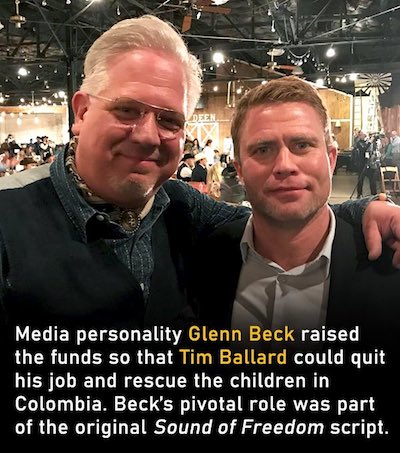

RFK

https://twitter.com/i/status/1678544638334967809

Support the Automatic Earth in virustime with Paypal, Bitcoin and Patreon.